AI Agent Harnesses Explained: Architecture, Ecosystem, and Multi-User Design

Models think. Harnesses are what give models hands, scope their memory, and decide what they're allowed to touch.

👋 Hi everyone, I am Hamza. I have 18 years of experience building large-scale machine learning ecosystems, I teach at UCLA and MAVEN, and I am the founder of Traversaal.ai.

Today, I am joined by Aishwarya, a product builder obsessed with turning ideas into working tools, especially with AI in the mix.

Welcome to Edition #35 of a newsletter that 15,000+ people around the world actually look forward to reading.

We’re living through a strange moment: the internet is drowning in polished AI noise that says nothing. This isn’t that. You’ll find raw, honest, human insight here — the kind that challenges how you think, not just what you know. Thanks for being part of a community that still values depth over volume.

🎓 Want to learn about Claude Code?

Join us on May 30th for a one-day workshop on Claude Code and ship your first agent!

Key Takeaways

Agent = Model + Harness. Every production agent is two systems running in concert: a language model generating tool calls, and a harness deciding which of those calls are actually allowed to execute.

Harnesses do the work models cannot. File access, sandboxed execution, and audit logging live in the harness layer. The model never directly touches your filesystem — the harness is the only code that does.

Codex and Claude Code take opposite bets. OpenAI’s Codex isolates by spinning up cloud containers per task; Anthropic’s Claude Code runs locally and asks for explicit consent on each consequential action. Both are valid for different workflows.

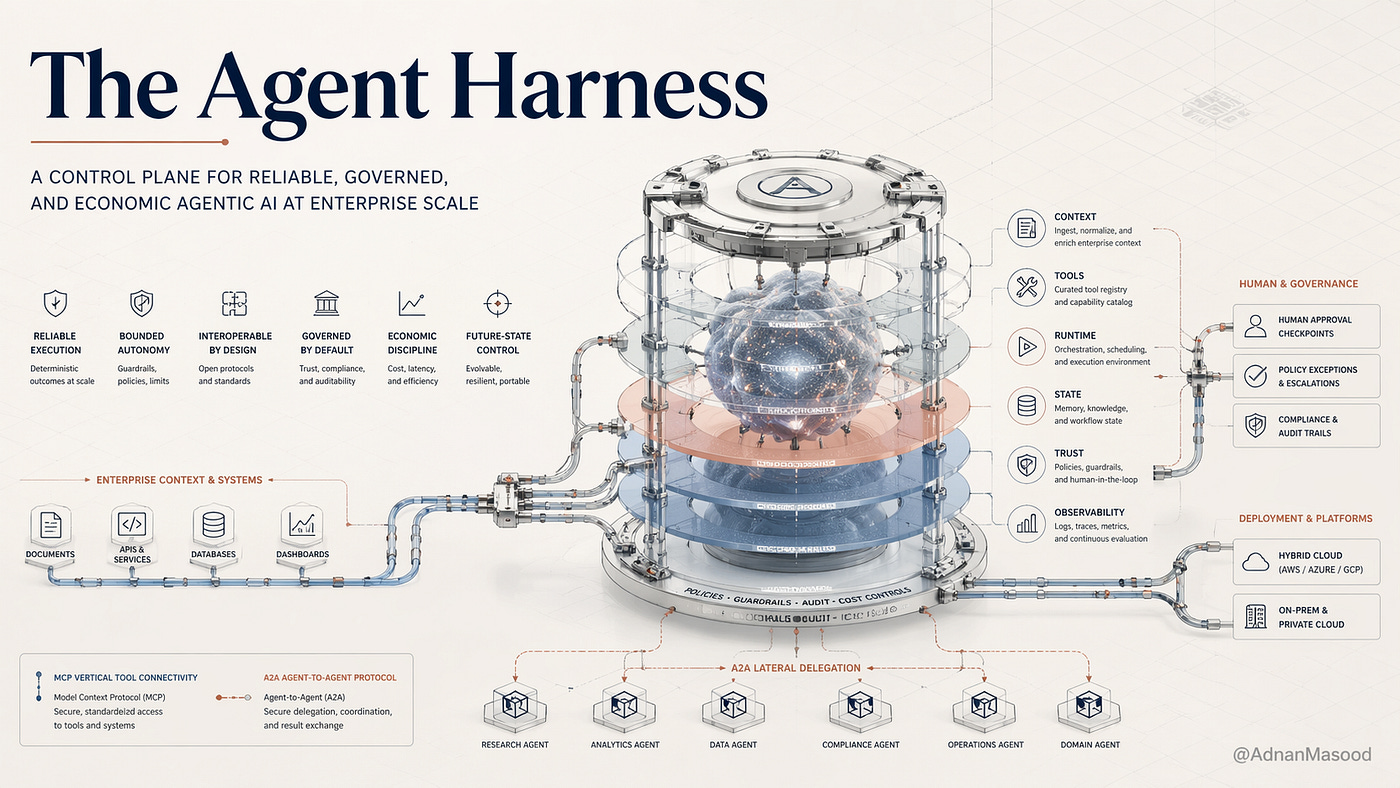

Multi-user is where harness design gets hard. Concurrent users force per-user permission inheritance, namespaced memory, and tamper-evident audit logs. Architectures that worked for one developer fail subtly when ten share an instance.

Safety lives in the harness, not the model. If you’re trusting the model to refuse bad actions, you have no safety. A refusal only counts when the harness validates each tool call against its schema and permissions and rejects the disallowed ones before execution.

Harness governance is now an org-design problem. Who configures the tool registry, who approves writes to shared memory, and who reviews audit logs are policy questions. Treating them as DevOps tasks is how teams end up with no policy at all.

Introduction

By May 2026 the limiting factor for production AI coding agents has stopped being the model.

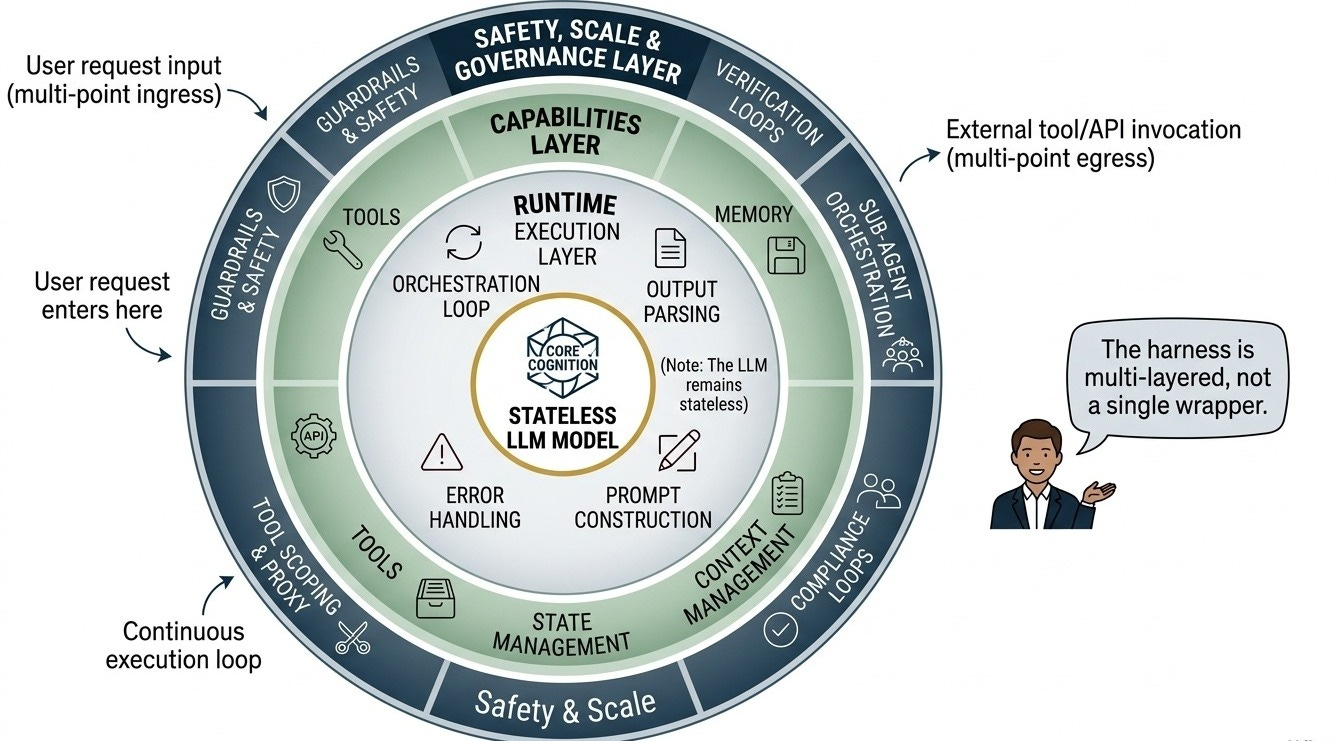

Frontier coding models post 60%+ on SWE-bench Verified and ship million-token contexts. The pieces that actually decide whether a team ships agent-powered features or produces expensive incidents are the runtime wrapped around the model: filesystem permissions, sandbox boundary, rollback path, audit log, per-user memory scope. That runtime is the agent harness.

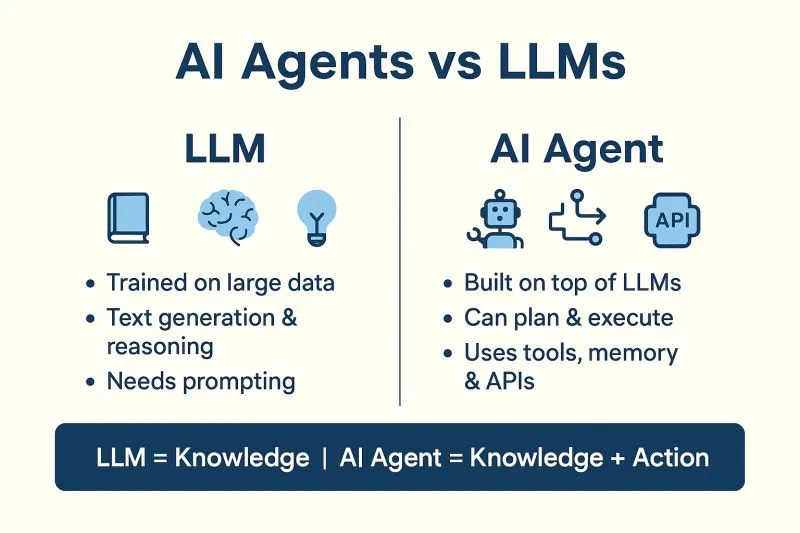

The shape of the problem is captured in a one-line formula: Agent = Model + Harness.

The model is the inference engine. It reads tokens, generates structured tool calls, and otherwise does nothing in the world. The harness is the execution environment that takes those calls, validates them, runs them inside an isolated workspace, and feeds structured results back. Anthropic’s own Claude Code best-practices post treats the harness as the layer where safety properties are actually enforced, not as decoration around an already-safe model.

In 2026 the harness market has clarified into two reference designs. OpenAI’s Codex ships an open-source CLI plus a cloud agent that spins up a fresh container per task, isolating concurrent runs through process boundaries rather than coordination logic. Anthropic’s Claude Code ships a terminal-resident harness that runs locally and asks for explicit consent on each consequential action, treating the developer as a co-signer on every meaningful tool call. They are not competitors so much as opposite design bets, useful precisely because they map cleanly to different workflow profiles.

What follows is a practitioner-grade breakdown of what an agent harness is, the five things it must do, how Codex and Claude Code actually implement them, and the design assumptions that break the moment you have ten developers sharing an instance instead of one.

1. What Is an AI Agent Harness? The Formula Most Teams Get Half Right

1.1 The Agent = Model + Harness formula unpacked

The model is the inference engine: it processes tokens and generates a probability-weighted output sequence. It has no native ability to open a file, remember last Tuesday’s refactor, or refuse to delete a production database. The harness is the surrounding execution environment that gives the model hands: it intercepts model-generated tool calls, routes them through a permission layer, executes them in a controlled environment, and feeds structured results back into the model’s context. Neither component is optional: a model without a harness is a sophisticated autocomplete engine, and a harness without a capable model is an empty scaffold.
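To make the division of labor concrete, here is a minimal sketch of that loop in Python. Everything in it is an assumption made for illustration: `fake_model` stands in for a real model API, the `TOOLS` registry and the message format are hypothetical, and neither Codex nor Claude Code is claimed to work this way internally.

```python
import json
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class ToolCall:
    name: str
    args: dict


# Hypothetical tool registry: the only operations this harness will execute.
TOOLS: Dict[str, Callable[..., str]] = {
    "add": lambda a, b: str(a + b),
}


def fake_model(context: List[dict]) -> Optional[ToolCall]:
    """Stand-in for a real model: asks for one tool call, then stops."""
    if any(message["role"] == "tool" for message in context):
        return None
    return ToolCall(name="add", args={"a": 2, "b": 3})


def run_harness(model, context: List[dict], max_steps: int = 5) -> List[dict]:
    """Intercept tool calls, gate them, execute them, feed results back."""
    for _ in range(max_steps):
        call = model(context)
        if call is None:  # the model has nothing more to request
            break
        if call.name not in TOOLS:
            # Permission layer: unregistered tools are never executed.
            result = {"ok": False, "error": f"tool {call.name!r} is not registered"}
        else:
            try:
                result = {"ok": True, "output": TOOLS[call.name](**call.args)}
            except Exception as exc:  # a failed call becomes data, not a crash
                result = {"ok": False, "error": str(exc)}
        # Structured result goes back into the model's context.
        context.append(
            {"role": "tool", "name": call.name, "content": json.dumps(result)}
        )
    return context
```

Note that the model function only ever returns a description of what it wants done. The one place where anything actually runs is inside `run_harness`, which is why that is the place to put the checks.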

Consider a surgical robot. The AI model is the surgeon’s decision-making intelligence: it perceives the situation and determines the action. The robotic arm is the harness: it makes contact with the physical world. The sterilization protocols, motion envelope limits, force sensors, and logging systems built into that arm aren’t surgeon responsibilities; they’re harness responsibilities. A brilliant surgeon operating a poorly built arm is dangerous. A well-built arm with a weak decision system is also dangerous. Both halves matter.

Most teams in 2024 and early 2025 invested heavily in the model half (selecting frontier models, tuning prompts, evaluating outputs) while treating the harness as something they’d build out later. The teams who built rigorous harness infrastructure are the ones running agents confidently in production now.

1.2 What the harness actually does: the five core responsibilities

Every production harness, regardless of implementation language or deployment model, must fulfill five core responsibilities.

First, tool execution: the harness intercepts model-generated tool calls and translates them into real system operations (file writes, shell commands, API invocations), then returns structured results to the model’s context.

Second, memory and context management: the harness decides what the agent remembers across turns, tasks, and sessions, including how it summarizes, evicts, and retrieves prior context.

Third, sandboxing: the harness isolates agent execution so that a malformed tool call, an adversarial prompt injection, or a simple programming error cannot cascade into irreversible damage to the systems the agent is operating on.

Fourth, state persistence: the harness maintains the agent’s working environment (open files, branch state, task progress) across interrupted or resumed tasks, so an agent can be paused and restarted without losing coherent context.

Fifth, permission enforcement: the harness determines which tools, files, repositories, and external APIs the agent is authorized to access, and enforces those boundaries at execution time rather than trusting the model to self-police.

These are architectural invariants. Any production agent system missing one is operating with either an open attack surface or an uncontrolled blast radius.
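As one illustration of the fifth responsibility, here is a rough sketch of what enforcing boundaries at execution time can look like for file access. The `ScopedWorkspace` class, the tool names, and the paths are all hypothetical, and this is only a path-scoping check: it is not a substitute for real sandboxing with containers or OS-level isolation.

```python
from pathlib import Path


class PermissionDenied(Exception):
    """Raised by the harness when a tool call falls outside its scope."""


class ScopedWorkspace:
    """Hypothetical permission gate: an allow-list of tools plus one root directory."""

    def __init__(self, root, allowed_tools):
        self.root = Path(root).resolve()
        self.allowed_tools = frozenset(allowed_tools)

    def check(self, tool: str, target: str) -> Path:
        """Return the resolved path if the call is permitted, else raise."""
        if tool not in self.allowed_tools:
            raise PermissionDenied(f"tool {tool!r} is not allowed in this workspace")
        # Resolve first so '..' segments and absolute paths cannot escape the root.
        resolved = (self.root / target).resolve()
        if resolved != self.root and self.root not in resolved.parents:
            raise PermissionDenied(f"path {target!r} escapes the workspace root")
        return resolved
```

A harness would call `check` immediately before executing each file operation, for example `ScopedWorkspace("repos/project", {"read_file"}).check("read_file", "src/app.py")`. A request for `../outside.txt`, or for any tool not on the allow-list, raises `PermissionDenied` regardless of what the model intended. That is the sense in which the boundary does not depend on the model policing itself.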

1.3 The spectrum of harness sophistication

Harnesses exist on a maturity spectrum, and knowing where your current implementation sits is the first step toward knowing what to build next.

Level 0 (Bare Invocation): The model receives a prompt and returns text. A human reads that text and manually executes any suggested actions. No harness infrastructure at all, just a chat interface and a clipboard.

Level 1 (Tool-Calling Wrapper): The model can invoke a predefined tool schema, and a thin wrapper executes those calls. No persistent memory between sessions, no sandboxing, no rollback. A mistake is permanent until a human fixes it manually.

Level 2 (Session-Aware Harness): Persistent memory within a session, basic rollback via snapshotting, and sandboxed execution for a single concurrent user. This is roughly where most well-engineered individual developer tooling sat in 2025.

Level 3 (Multi-User Production Harness): Full per-user execution isolation, scoped permission matrices, shared audit logs with per-user attribution, and robust concurrent agent support. This is what Codex and Claude Code deliver today, and it’s the baseline any serious engineering organization needs to be targeting.
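One way to turn this spectrum into a quick self-assessment is to write the levels down as cumulative capability checklists. The sketch below does that in Python; the capability names are shorthand invented here for the features listed above, not a standard taxonomy.

```python
from enum import IntEnum


class HarnessLevel(IntEnum):
    BARE_INVOCATION = 0
    TOOL_CALLING_WRAPPER = 1
    SESSION_AWARE = 2
    MULTI_USER_PRODUCTION = 3


# Hypothetical capability names, paraphrasing the level descriptions above.
REQUIREMENTS = {
    HarnessLevel.TOOL_CALLING_WRAPPER: {"tool_calling"},
    HarnessLevel.SESSION_AWARE: {"session_memory", "rollback", "sandbox"},
    HarnessLevel.MULTI_USER_PRODUCTION: {
        "per_user_isolation",
        "permission_matrix",
        "attributed_audit_log",
        "concurrent_agents",
    },
}


def classify(capabilities: set) -> HarnessLevel:
    """Highest level whose requirements, and all lower levels', are met."""
    level = HarnessLevel.BARE_INVOCATION
    for candidate in sorted(REQUIREMENTS):
        if REQUIREMENTS[candidate] <= capabilities:
            level = candidate
        else:
            break  # levels are cumulative: a gap here caps the rating
    return level


def missing_for_next(capabilities: set) -> set:
    """Capabilities still needed to reach the next level (empty at Level 3)."""
    current = classify(capabilities)
    if current == HarnessLevel.MULTI_USER_PRODUCTION:
        return set()
    return REQUIREMENTS[HarnessLevel(current + 1)] - capabilities
```

Treating the levels as cumulative is a design choice in this sketch: a harness with a sandbox and session memory but no rollback is still rated Level 1, and `missing_for_next` reports `rollback` as the thing to build next.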

1.4 Harness vs. framework vs. orchestrator: clearing up the confusion

Three terms regularly get conflated in practitioner conversations, and conflating them produces architectural mistakes.

An AI framework, such as LangChain, LlamaIndex, or CrewAI, is a developer library that provides abstractions for building agent pipelines. It helps you wire together components, define chain-of-thought flows, and integrate with vector stores. It does not sandbox tool calls or enforce permissions. It’s a construction kit, not a job site.

An orchestrator, such as Temporal, Prefect, or Airflow when used for agent pipelines, manages task sequencing, retries, and workflow state. It doesn’t understand agent-specific concerns like memory scoping, tool call injection prevention, or per-user isolation.

A harness is the runtime environment that actually executes agent actions, enforces constraints, manages memory, and provides the safety boundary between the model and the systems it operates on. A sophisticated harness may use a framework to structure its agent logic and an orchestrator to manage task queuing, but the harness is the higher-level concept that contains both.

If the model is a contractor, the framework is the project management software (Jira, Linear), the orchestrator is the job scheduler, and the harness is the actual job site, with locked tool cabinets, safety equipment, a building permit on the wall, and a foreman checking credentials before any tool leaves the shelf.